2.1. Presentations Saturday, June 17th.

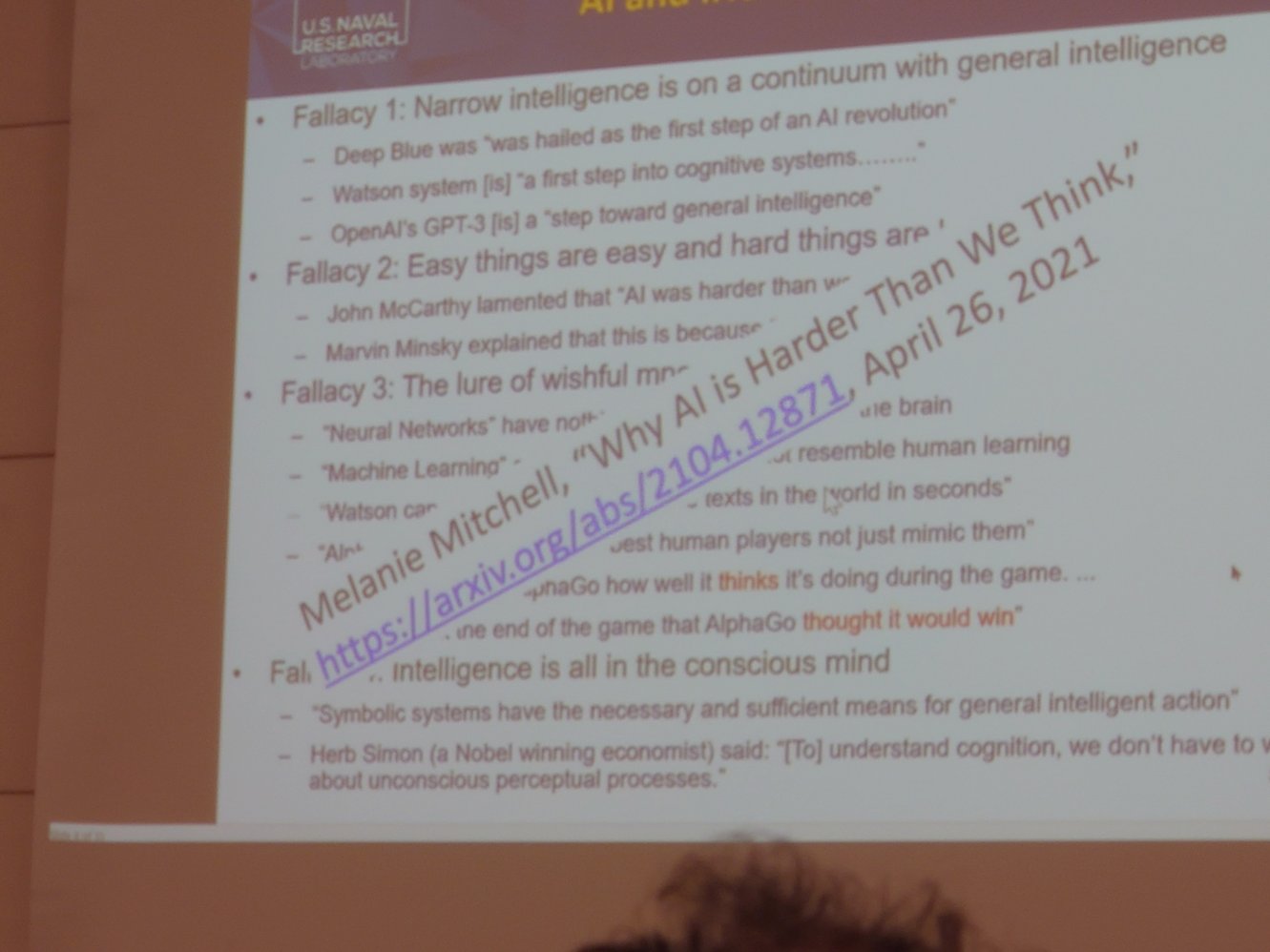

2.1.1. Time To Move On: AI And Cognitive Science Need A Divorce.

Tom Ziemke, LiU, talked about ''Time To Move On: AI And Cognitive Science Need A Divorce''.

With good points about the philosophical ''other minds'' problem.

I.e. the ''other minds'' problem is unsolvable.

We (humans) do not know what other (human) minds are really like (what they experience).

And, indeed, people have a tendency towards anthropocentric anchoring: looking at, and interpreting, other (natural and artificial) minds as being similar to human minds.

Which is rather problematic as we have ''no reason to assume that AGI will be human-like''.

Saying that cognition is computation hasn't helped either.

I.e. it indicates that natural and artificial minds are more similar than they probably are.

Very relevant points indeed.

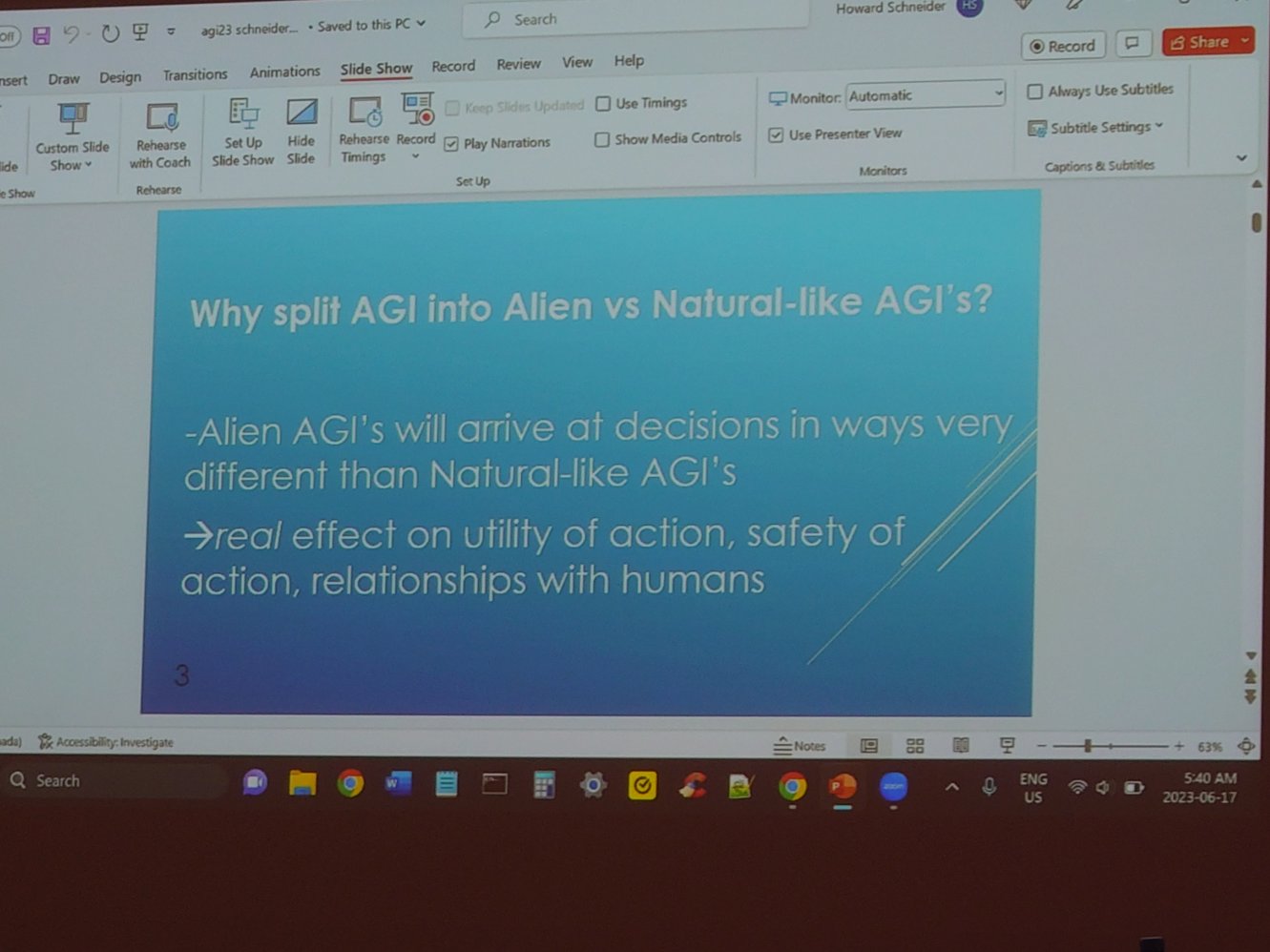

2.1.2. Alien versus Natural-like Artificial General Intelligences.

Howard Schneider talked about ''Alien versus Natural-like Artificial General Intelligences'', ACM (digital library).

He started with a definition:

An AGI which is not a natural-like AGI is termed an alien AGI [1].

Indeed:

It may be, that alien AGIs’ understanding of the world is so different from a human understanding that to allow alien AGIs to do tasks done originally by humans, is to eventually invite strange failures in the tasks [1].

Certainly, what it means to actually understand is in itself a tricky concept.

What is our understanding grounded in? What are symbols really grounded in?

How does a natural system understand, and ground symbols, and how could an artificial system understand (by grounding symbols...)...?

Schneider writes in ''An analogical inductive solution to the grounding problem'' [2]:

Harnad notes that the symbol grounding problem essentially is how symbols get their meaning, and thus related to what actually is meaning. When symbols are being operated on in a cognition-like module, the symbols are being manipulated following rules which are based on the symbols shapes, so to speak, rather than their meaning [2].

Harnad (1994) does write, ''my guess is that the meanings of our symbols are grounded in the substrate of our robotic capacity to interact with the real world of objects, events and states of affairs that our symbols are systematically interpretable as being about'' [2].

Certainly, without real understanding, (alien) artificial systems can look at problems that natural intelligence can easily solve, and come up with different (wrong) conclusions.

E.g.

A robot needs to cross a deep river. It sees a river filled with leaves (which it has no previous experience with, understanding or knowledge of other than to recognize them). It can only say that they are ''solid08'' objects [1].

Through the use of natural-like AGI (causality, analogy, physics and so on), a human-like AGI is able to reason that it is dangerous to cross the river.

(Alien) ChatGPT doesn't work this way though:

If we give the same problem to chatGPT (here considered an alien system), and tell it that the river is filled with ''leaves'', it can use its super-human knowledge of much of what has ever been written, to conclude that it is dangerous to cross the river [1].

(Alien) ChatGPT can't reason about unknown objects.

But, if we tell chatGPT that the river is filled with ''solid08'' objects, and we describe what ''solid08'' objects are, it is not able to make a decision about crossing the river, as it has not read about ''solid08'' objects before [1].

Obviously!
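The contrast in the river example can be made concrete with a small toy sketch. This is not Schneider's architecture and not how ChatGPT works internally; it only illustrates the difference between deciding from a remembered label and deciding from described properties. All names here (`ObjectDescription`, `KNOWN_LABELS`, the two decision functions and the two properties) are hypothetical and chosen for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectDescription:
    """What the robot is told or perceives about the objects in the river."""
    label: str
    floats: bool            # assumed property: the objects sit on the water surface
    supports_weight: bool   # assumed property: the objects can carry the robot


# Hypothetical "memory of what has been written": verdicts keyed by label only.
KNOWN_LABELS = {"leaves": "dangerous", "bridge": "safe"}


def label_lookup_decision(obj: ObjectDescription) -> str:
    """Decide purely by recalling a verdict for the label; ignores properties."""
    return KNOWN_LABELS.get(obj.label, "undecided")


def property_based_decision(obj: ObjectDescription) -> str:
    """Decide from the described properties, regardless of the label."""
    if obj.supports_weight:
        return "safe"
    if obj.floats:
        # Floating objects that cannot carry weight hide deep water underneath.
        return "dangerous"
    return "undecided"


leaves = ObjectDescription(label="leaves", floats=True, supports_weight=False)
solid08 = ObjectDescription(label="solid08", floats=True, supports_weight=False)

for obj in (leaves, solid08):
    print(obj.label, label_lookup_decision(obj), property_based_decision(obj))
```

The label lookup gives a verdict for ''leaves'' but stays undecided for ''solid08'', even though both descriptions carry the same properties. The property-based rule treats them identically.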